Thursday, September 21, 2006

Friday, May 12, 2006

Rocket renaissance

ALMOST two years ago, SpaceShipOne became the first privately built vehicle to travel into space. Although the X-15 did something similar four decades earlier, the simple, safe design and low cost of SpaceShipOne were significant: for the first time the cost of a spacecraft fell within the price range of companies and wealthy individuals, rather than just governments. That’s one reason why today there are at least half a dozen private spaceships at various stages of design and construction. Rocketry is going through a renaissance.

Stalking the corridors of the International Space Development Conference (ISDC) in Los Angeles this year are the men who are going to build a new generation of spaceships. While promises about cheap space travel for the masses have been made before, this time things seem different. Besides the birth of SpaceShipOne, there has been a recent shift in US legislation to encourage personal spaceflight by simplifying government licensing requirements, straightening out uncertainties over liability issues and allowing paying customers to fly at their own risk in novel craft that might be dangerous.

Most importantly, though, there is money. Lots of it. More than $1 billion has been committed to ships and infrastructure. The state of New Mexico, for example, has recently started work on a purpose-built $225m spaceport, of which the state has committed $135m in funding, even though it has nothing yet to fly. The spaceport is intended to provide a launch pad for commercial operations of the second-generation suborbital vehicle SpaceShipTwo, which is currently being designed in Mojave, California, and may start test flights as early as next year. The commercial development of this vehicle is, in itself, costing its financial backer, Virgin Galactic, based in London, $240m. One study suggests that after five years of operation, New Mexico spaceports will have led to $1 billion of spending within the state.

Meanwhile, in Dubai, another new spaceport costing $265m was recently announced by space tourism company Space Adventures, based in Arlington, Virginia. Of this sum, $30m has been committed. (Space Adventures itself, in the last five years, has made $120m in orbital spaceflight sales, taking wealthy businessmen, such as Dennis Tito, to the International Space Station with the help of the Russians.) Many other spaceports, too, are being planned around the world for an anticipated new generation of tourist vehicles, some of which are still on the drawing board or have only recently come off it. But overall, the signs lead many to suspect that a lot of money is being spent on rocketry and infrastructure that has not yet been revealed.

All this enthusiasm is being generated by plans by individuals and private companies to build a variety of suborbital spaceships--craft that travel just to the edge of space and back again. Which one, though, is best and who will succeed? This is the topic of a two-page article in this week's Economist. There is also a useful chart comparing all of the main designs.

I've been researching this piece for some time and a large array of overmatter has accumulated. I'd like to thank Charles Lurio, a space consultant, and Roger Launius, a curator at the Smithsonian Air and Space Museum, for their help as their comments were left on the cutting room floor. Charles is particularly knowledgeable about hybrids and solids. He also reckons that the Space Adventures vehicle, still in design, is a solid-based rocket.

___________________________________________

Rocket renaissance

The Economist

May 11th 2006 | LOS ANGELES

The era of private spaceflight is about to dawn

TWO years ago next month space travel underwent its Wright-brothers moment with the first flight of SpaceShipOne. The roles of Orville and Wilbur were played by Burt Rutan, who designed the craft, and Mike Melvill, who flew it—although they were ably assisted by Paul Allen, one of the founders of Microsoft, who paid for it. Of course, history never repeats itself exactly. Unlike the brothers Wright, who were heirs to a series of heroic failures when it came to powered heavier-than-air flight, Messrs Rutan and Melvill knew that manned spaceflight was possible. What they showed was that it is not just a game for governments. Private individuals can play, too. (more...)

___________________________________________

Overmatter:

XCOR on why it chose a ground-launched suborbital

Jeff Greason, president of XCOR Aerospace, a small aerospace company based in Mojave, California, is developing a two-person, ground-launched suborbital rocketplane. Ground launch, he says, is technically more difficult but has significantly lower unit operating costs. “We are doing it this way because our engineering tradeoffs lead us to believe it is optimum for us for our market, resources, for the skills we have. Does that mean everybody else’s idea is bad? Of course not.” The XCOR vehicle will be a derivative of a previous design called the Xerus. As of last October, the company has had money from private investors to start work.

Space Adventures on its vehicle plans

Of the six most prominent vehicles being developed (see chart), two are launched from the air. SpaceShipTwo will launch from a purpose-built high-altitude aircraft known as Eve, built by aviation designer Burt Rutan. And although plans for the Explorer have not been fully disclosed, Eric Anderson, president and CEO of Space Adventures, says it will launch from the top of a high-altitude Russian research plane, the M-55X. The project, he says, will be fully funded by a venture capital group belonging to the wealthy, space-enthusiast and entrepreneurial Ansari family. The Myasishchev Design Bureau, an experienced Russian aerospace organization based near Moscow, will build it, with oversight from the Russian Space Agency.

Rocketplane on the stability of its XP

At first sight, the notion of converting a Learjet to a suborbital vehicle fills some with horror. However, as Charles Lauer explains, there is actually very little left of it except the fuselage. “Starting with the existing Lear gave us a frame of reference to be able to build from and saved us a year in terms of schedule,” he says. It has a new delta wing and a new v-tail, and wind tunnel studies show it has “natural stability” on re-entering the atmosphere, says Mr Lauer. SpaceShipOne solved the problem of stability on re-entry with a revolutionary technique of folding its wings in half, something called the feather. Mr Lauer says the XP has this stability but “without having to reconfigure our airplane twice in the course of a flight profile and that’s a more sensible way to fly”.

George Whittinghill, chief technologist for Virgin Galactic, responds, "SpaceShipTwo can re-enter in any attitude, even upside down. The feather acts like a shuttlecock, and will right the ship as soon as it hits air, without any action from the pilot. I seriously doubt that the Rocketplane can re-enter in any attitude, hands-off, righting itself. Its attitude control jets will have to position the ship just right, or it will get into trouble later on during the entry."

Dennis Tito on liquid fuels

Dennis Tito, an entrepreneur and the world’s first space tourist, says ultimately liquid fuel will make a suborbital more reusable because it is possible to “gas and go”. In other words, operations can be more aeroplane-like and this will lower costs. XCOR’s ship seems quite likely to be the smallest, and cheapest, of all the new suborbital ships. Although no timeline has been announced, one potential customer reckons that they could fly within a year and a half. The ship is also intended to fly four flights per day.

Charles Lurio on rocket design

With their premixed fuel and oxidizer, solid propellant systems have always raised safety questions. They’re tricky systems to master, as evidenced by the literally explosive difficulties that the then-Soviets had in developing them for ICBMs. As a result the USSR retained primarily liquid-fueled missiles long after they were minimal parts of the US arsenal. NASA, driven by the desire to get the Space Shuttle program funded, convinced itself that solid boosters (with a lower upfront development price) were acceptable despite long-standing anxiety about the safety of putting people atop them. Of course, ‘turning off’ a solid in an abort condition - let alone restarting it in flight - is essentially impossible.

Hybrids do appear to have a higher safety potential, not merely by separating fuel from oxidizer but because they can be shut off by cutting off the flow of oxidizer. As with solids, however, they have much larger combustion chambers than do liquid motors. That volume effectively ‘stores’ combustion energy, which, as one person dryly put it, “can go somewhere inappropriate in a failure [condition].” But the greatest unease that I and others have with the hybrid results from the relatively minuscule amount of existing experience in building and operating them compared to either solids or liquids.

As I’ve noted, Brian Binnie had to cope with significant accelerations and vibrations while SpaceShipOne’s motor was firing (see page 60 of the February 6th Aviation Week). There are design and flight adjustments that likely would ameliorate those effects for commercial passengers. By contrast, while a solid’s acceleration can also be moderated (though not in real-time) it essentially unavoidably produces what’s not-so-fondly referred to as the ‘paint shaker effect.’ Of course, reentry forces (Mr. Binnie experienced 5.5g’s) are not directly dependent on the ascent propulsion system, and are malleable with aerodynamic design and chosen flight paths.

Some of the initial passengers on the suborbital flights will want to ...

Tuesday, April 25, 2006

Reefer madness

Is marijuana medically useful? Many people, from patients to doctors and scientists, think so. The US Food and Drug Administration appears to disagree. Its position is in line with what the White House, the Drug Enforcement Administration and some vocal congressmen think. However, in eleven states around America compassion hasn't taken a back seat to politics, and laws allowing medical marijuana have been passed. More on this subject in this week's Economist...

Reefer madness

Apr 27th 2006

Marijuana is medically useful, whether politicians like it or not

IF CANNABIS were unknown, and bioprospectors were suddenly to find it in some remote mountain crevice, its discovery would no doubt be hailed as a medical breakthrough. Scientists would praise its potential for treating everything from pain to cancer, and marvel at its rich pharmacopoeia—many of whose chemicals mimic vital molecules in the human body. In reality, cannabis has been with humanity for thousands of years and is considered by many governments (notably America's) to be a dangerous drug without utility. Any suggestion that the plant might be medically useful is politically controversial, whatever the science says. It is in this context that, on April 20th, America's Food and Drug Administration (FDA) issued a statement saying that smoked marijuana has no accepted medical use in treatment in the United States. (more...)

________________________________________________

Update (12th May)

In response to this article, we have received a letter from John P. Walters, director of the White House Office of National Drug Control Policy--also known as the "Drug Czar". I expect The Economist will publish this on its letters page in the near future.

Commentary & comments from other blogs:

This article has inspired a lot of commentary, some of it useful.

One commentator here discusses the FDA's existing programme to supply cannabis medicinally. (more...)

From the Democratic Daily (here...)

Overview of the debate, Politics and Pot.

A critique of the Reefer Madness (here...)

More commentary, and my reply to one critique of the piece (more..)

And a few more comments (here..)

________________________________________________

References

Nicoll, Roger & Alger, Bradley, 2004. The brain's own marijuana. Scientific American. December 2004.

Wilson, Clare, 2005. Miracle Weed, New Scientist, vol 185, issue 2485, p38.

Neuroprotection by delta-9-tetrahydrocannabinol, the main active compound in marijuana, against ouabain-induced in vivo excitotoxicity. The Journal of Neuroscience, 2001, 21 (17): 6475-6479.

Inter-agency advisory regarding claims that smoked marijuana is a medicine. FDA, April 20, 2006.

Medical Marijuana. American Medical Association, June 2001.

Marijuana and medicine: assessing the science base. 1999. Institute of Medicine.

Press releases

Doctor suggested cannabis for pain relief, says one in six medicinal users in the UK. Press release from McGill University.

Cannabis-based medicine relieves the pain of rheumatoid arthritis and suppresses the disease. Press release from Journal of Rheumatology, Oxford University Press.

Cannabis extract reduces pain in multiple sclerosis patients. Press release from British Medical Journal.

UK trial results on value of cannabis for multiple sclerosis patients. Press Release from the Medical Research Council.

Brain’s own cannabis compound protects against inflammation. Press release from journal Cell.

Hebrew University scientists develop prototype drug to prevent osteoporosis. Press release from Hebrew University of Jerusalem.

Cannabis-based drugs could offer new hope for inflammatory bowel disease patients. Press release from University of Bath.

Groups

Food and Drug Administration

Drug Enforcement Administration

Office of National Drug Control Policy

The National Organization for the Reform of Marijuana Laws

Multidisciplinary Association for Psychedelic Studies

Medical Marijuana ProCon.org

GW Pharmaceuticals

Marinol

Monday, April 17, 2006

The magic of nano

Nano-watchers were quick to raise the question of whether this is the first example of a harmful nanotechnology product. This time, at least, it does not appear that nanotechnology is to blame. MagicNano was released in two versions: a pump spray and an aerosol spray. While the product inside the packaging was identical, only the aerosol spray caused respiratory problems. So it seems likely that the problem is down to the propellant in the aerosol.

This finding will not give much comfort to anyone. Companies making products that include nanoparticulates would very much like firm guidance from governments as to what tests they need to perform to demonstrate their product is safe. Governments, meanwhile, seem to be waiting for advice from scientists.

Has all the magic gone?

Apr 12th 2006

A nanotechnology product is recalled in Germany after health concerns (more...)

Saturday, April 08, 2006

The drug trial that went wrong

This important story about a drug trial that went wrong in London has almost been buried by the alarm over a single case of H5N1 in a swan in Scotland. In the middle of last month, six people were taken seriously ill--with multiple organ failure--after taking part in a small clinical trial of an antibody treatment called TGN1412.

The mishap was so serious that Britain's Medicines and Healthcare products Regulatory Agency (MHRA), a government body, swiftly launched a full inquiry. On Wednesday of this week it announced interim findings. The trial had been run correctly, doses were given as they were supposed to be, and there was no mistake in manufacturing. In other words, there was something unexpected about the drug itself, despite testing on animals and human-cell cultures, and despite the fact the doses given to humans were only 1/500th of what had been given to animals.

This is a difficult result for the drug business because it raises questions about the right way of testing medicines of this kind. TGN1412 is unusual in that it is an antibody. And it is an unusual antibody as well. Unlike all other antibody drugs it is what is called a “superagonistic” antibody, designed to increase the numbers of a type of immune cell known as regulatory T-cells.

Reduced numbers, or impaired function, of regulatory T-cells have been implicated in a number of illnesses, such as type 1 diabetes, multiple sclerosis and rheumatoid arthritis. Boosting the pool of these cells seemed like a good treatment strategy. Unfortunately, that strategy fell disastrously to pieces and it will take a little longer to find out why.

The result highlights concerns raised in a paper just published by the Academy of Medical Sciences, a group of experts based in London. It says there are special risks associated with novel antibody therapies. For example, their chemical specificity means that they might not bind to their targets in humans as they do in other species.

The MHRA has decided that a working group of experts is now needed to decide how trials of this sort of drug can be handled more safely. Its main concern is how to test protein molecules that have novel modes of action--particularly in the immune system. While this expert group is working (it could take around three months) the MHRA will authorise further trials of compounds of this kind only after taking advice.

Tales of the unexpected

Apr 6th 2006

Why a drug trial went so badly wrong

IN ANY sort of test, not least a drugs trial, one should expect the unexpected. Even so, on March 13th, six volunteers taking part in a small clinical trial of a treatment known as TGN1412 got far more than they bargained for. All ended up seriously ill, with multiple organ failure, soon after being injected with the drug at a special testing unit at Northwick Park Hospital in London, run by a company called Parexel. One man remains ill in hospital.

(more.. subscription required)

Wednesday, March 29, 2006

Battle of Britannica

In this week’s science section we follow the fight between the journal Nature and the encyclopedia Britannica. The argument is about a comparison that Nature did between two encyclopedias: Wikipedia and Encyclopedia Britannica[1].

I don’t intend to weigh in either way on the debate about whether an open-source encyclopedia can approach the accuracy of an edited one. I'm not an information scientist. But as a science journalist, I'm interested in two issues: one is whether Britannica really is as error-laden as the Nature study seems to suggest; the other is whether Britannica really is only 30% more accurate than Wikipedia.

This has been a fractious debate, so let me set out my stall. That way it is clear where I’m coming from and anyone who suspects I have an axe to grind in either direction will at least have some facts to base their conclusions on. Firstly, I think Wikipedia and Britannica are both great. Very different. But wonderful resources.

At the outset, I also ought to declare that I worked at Nature as a news journalist in 2000. So I have a lot of respect for the idea that led to this study, and the efforts that were taken to make it happen. It’s a fascinating question that none of us had thought to ask before: how accurate are science articles in Wikipedia when compared to their equivalents in the gold-standard Britannica?

Unfortunately, answering this question is not as straightforward as it may seem. And while experts may have been involved in the reviews, the methodology was designed, and the data collated, by journalists, not scientists. The results may have been published in Nature, but they were published in its front half as a news story—not the back half where the original science is published. So the first thing to emphasise is that the study is not a scientific one in the sense that one would normally expect of a “study in Nature”.

For anyone interested in the minutiae of the debate, it is worth looking at Britannica’s 20-page review of the Nature study[2]. Ignore the moans about the availability of study data and misleading headlines, and go straight to the Appendix. Here you will find that Britannica has taken issue with 58 of the “errors” that reviewers reported finding in their tome. Reading these it is difficult to avoid concluding that about half of the errors Nature reviewers have flagged up are actually points of legitimate debate rather than mistakes. This is important because if 47% of the "errors" in Britannica can actually be ignored, then rather than having 123 errors in 42 articles (about 3 per article), it has only 65 (about 1.5 per article). A big difference for Britannica’s reputation.
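The arithmetic in that paragraph can be checked with a few lines of Python. This is only a sketch using the figures quoted above (123 flagged errors, 58 disputed, 42 articles); the variable names are illustrative, and it assumes for the sake of argument that every disputed "error" is discounted in full.

```python
# Figures quoted in the post: Nature's reviewers flagged 123 "errors" in
# 42 Britannica articles, and Britannica disputes 58 of them.
flagged_errors = 123
disputed_errors = 58
articles = 42

# Share of flagged errors that Britannica contests.
disputed_share = disputed_errors / flagged_errors

# Errors left if every disputed item is discounted (an assumption, not a finding).
remaining_errors = flagged_errors - disputed_errors

print(f"disputed share: {disputed_share:.0%}")
print(f"errors per article as flagged: {flagged_errors / articles:.1f}")
print(f"remaining errors: {remaining_errors}")
print(f"errors per article if disputes are upheld: {remaining_errors / articles:.1f}")
```

Discounting the disputed items roughly halves the per-article error rate, which is the point the paragraph makes about Britannica's reputation.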

Nature has a good answer to this[3,4]. Look, they say, we were not trying to count the actual number of mistakes but to compare the relative accuracy of each encyclopedia. Sure, reviewers will have flagged plenty of statements that we counted as errors but which are in fact correct or debatable. But because reviewers were not told which article came from which encyclopedia, they would have been just as likely to make the same kind of mistakes (and the same number) when reviewing Wikipedia articles. In other words, because the study was done “blind”, there is no evidence of systematic bias that would alter the conclusion that Britannica was 30% more accurate.

The next question is whether the study was done in a way that would not introduce any systematic bias. Here, I had a few concerns. One of the first things that bothered me about the study was that some data, by necessity, had to be thrown away. That data was when reviewers declared that an article was badly written. My worry is that one of the things that was being counted was misleading statements. What I wondered was whether there was a point at which a lot of misleading statements simply became a badly written article and therefore unmeasurable? Wikipedia articles are often badly written, so I wondered if it was simply easier to count misleading statements in Britannica's better written articles. I don't know the answer to this, so I raise it only to flag the point that making these comparisons is not straightforward.

Britannica disputes 58 errors[5]. At The Economist, a researcher and I counted roughly 20 of them as errors of omission[5]. Although Nature says it took articles of "comparable" length, this would not always have been possible. Articles on the Thyroid Gland, Prion and Haber Process are all simply shorter in Britannica. Yet in all of these subjects the reviewer cited an error of critical omission, something they would have noticed when comparing the longer Wikipedia article side-by-side with the Britannica article. In another case, Paul Dirac, Britannica claims that reviewers were sent an 825-word article. If true, this would have been compared with a 1,500-word article in Wikipedia--yet Britannica is given another error for not mentioning the monopole in its 825-word article. In Wikipedia this gets a 44-word mention in an article of twice the length. This doesn't seem fair. However, I can't replicate this finding because, as of writing, the articles available online at both sites are 1,500 words long and mention the monopole.

A reviewer is faced with two articles, one which is slightly longer, a bit more rambling, where facts have been bolted in over the years with no eye for comprehension. The other is edited for style and readability, and streamlined to include the facts that are vital in an article of that length. The problem is that when these two articles are compared side-by-side, it will be hard for a reviewer not to notice that one article has a fact or a citation in the rambling one that the more concise one doesn't. What makes this more problematic is that there is no control for this test. We don't know if a reviewer would have actually even noticed that this "critical fact" was missing had it not been in the Wikipedia article. So my concern is that in the Nature study Britannica is more liable to be unfairly criticised for errors of omission--simply because of the different nature of the information sources.

This kind of error is important because it would have an impact on the relative accuracy of each encyclopedia. For example, assuming for the sake of argument that all Britannica's errors of omission were the product of a systematic bias this would mean that Britannica was 50% more accurate, not 30%. Warning: this is an example, not my estimation of the true difference between the two encyclopedias.
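To see how sensitive the headline comparison is to errors of omission, here is a rough Python sketch. Two things in it are assumptions rather than anything stated in this post: the Wikipedia total of 162 errors, which is the figure reported in Nature's original news article[1], and the reading of "X% more accurate" as "Wikipedia has X% more errors than Britannica". The function name `accuracy_edge` is purely illustrative.

```python
def accuracy_edge(wikipedia_errors: int, britannica_errors: int) -> float:
    """Return Wikipedia's excess errors as a fraction of Britannica's count."""
    return wikipedia_errors / britannica_errors - 1


WIKIPEDIA_ERRORS = 162   # assumption: taken from Nature's article, not from this post
BRITANNICA_ERRORS = 123  # quoted in the post

# Comparison using the counts as published.
baseline = accuracy_edge(WIKIPEDIA_ERRORS, BRITANNICA_ERRORS)
print(f"as published: {baseline:.0%}")

# Discount the errors of omission counted independently (17 and 21),
# plus the rounded figure of about 20.
for omissions in (17, 20, 21):
    adjusted = accuracy_edge(WIKIPEDIA_ERRORS, BRITANNICA_ERRORS - omissions)
    print(f"discounting {omissions} omissions: {adjusted:.0%}")
```

On these assumptions the gap moves from roughly a third to somewhat over a half, in the same region as the illustrative 50% above. The exact value depends on which omission count is used, which is why the figure should be read as an example and not as an estimate.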

Another concern is in the way the data were compiled. Nature’s journalists were not always able to find identical subject entries in both encyclopedias. When that happened, they did an online search on the subject at both websites and, if necessary, bolted together bits from several articles. (Britannica complains that in some cases they used paragraphs from a children’s encyclopedia, and a Yearbook article).

Unfortunately, this collation of data was not done blind. When Nature’s journalists compiled the material, they knew which encyclopedia it came from and could well have introduced an unconscious but systematic bias while collating the material. In addition, because Wikipedia has 1m entries and Britannica has only 124,000 [6], it is possible that reviewers were more often sent complete Wikipedia articles to compare with cobbled-together Britannica articles than the other way round. This might be particularly important when counting omissions in articles. How do we know that the omissions are truly the mistakes made by Britannica or ones made by the journalists?

Finally, it is important to note that this isn’t, technically, a comparison of encyclopedias but rather a comparison of what the average user would find if they searched for a subject online at Wikipedia or Britannica. (This is actually only made clear in the supplementary information that was published a week after the initial article.) It may be true that the average online user is just looking for information, but it is also true that we rate that information according to where we found it. If I downloaded something from a children’s encyclopedia or a Yearbook article I’d know to judge it in that context. The reviewers were not aware of this distinction, so there is no way of knowing how it might have affected their judgement.

In conclusion, I’d say that I don’t think the Nature study has proven, to my satisfaction at least, that Wikipedia is 30% less accurate than Britannica. I was also left with the impression that the study was trying to compare apples with oranges.

I have great respect for the fine editors and journalists at Nature, and I don’t think for one minute that they deliberately fixed or cooked the results[7]. I just think the study doesn't quite demonstrate what it says it does.

Nor is this a critique of the fine work of the Wikipedians. I’ll continue to read Wikipedia, and cite it[8]--but I’ll also continue to check everything I use. The Economist article, free only to subscribers, is available here.

References

1. Internet encyclopedias go head to head. Jim Giles, Nature 438, 900-901. December 15, 2005.

2. Fatally flawed. Refuting the recent study on encyclopedic accuracy by the journal Nature.

3. Encyclopedia Britannica and Nature: a response. March 23, 2006.

4. Britannica attacks… and we respond. Nature, Vol 440. March 30 2006.

5. March 2006. 58 debated errors, about 20 errors of omission

In total, a researcher at The Economist and I (independently of each other) counted in the Britannica report 58 examples of “errors” which Nature reviewers identified and which Britannica contests. We also looked at each of these 58 errors and counted the number of errors of different kinds (factual, misleading and omissions). This is an inexact science. The crucial figure was how many errors of omission were found, as this is one of the things I identify as a source of potential bias in the study. I counted 17 errors of omission. The Economist researcher (who is far better at this sort of thing than I am) counted 21. We decided to say that about 20 were errors of omission. The point is not to give an exact figure, because neither of us is qualified to say whether these were really errors of omission or not, but to give readers an idea of how a small but arguable systematic bias could have a big impact on the comparison.

6. 124,000 articles in Britannica

Tom Panelas “Our big online sites have about 124,000 articles in total, but that includes all of the reference sources, including our student encyclopedia, elementary encyclopedia, and archived yearbook articles. The electronic version of the Encyclopaedia Britannica proper has about 72,000 articles. The print set has about 65,000.”

7. Nature mag cooked Wikipedia study. Andrew Orlowski, The Register. March 23, 2006.

8. Small wonders. Natasha Loder, The Economist. December 29th, 2004.

Other further reading:

Supplementary information to accompany Nature news article “Internet encyclopaedias go head to head” (Nature 438, 900-901; 2005)

In a war of words, famed encyclopedia defends its turf—At Britannica, Comparisons to an online upstart are a bad work of ‘Nature’. Sarah Ellison. March 24, 2006.

Encyclopedia Britannica, Wikipedia.

"Encyclopaedia Britannica." Encyclopædia Britannica. 2006. Encyclopædia Britannica Premium Service. 24 Mar. 2006 (no sub required)

Wikipedia, Wikipedia.

On the cutting-room floor: quotes from sources

Nature only wanted to respond on the record in writing to queries, so I have a much shorter collection of quotes from reporter Jim Giles. Ted Pappas agreed to be interviewed on the record, and we spent a lively 45 minutes talking about the study and the nature of information.

Jim Giles, senior reporter, Nature

"Britannica is complaining that we combined material from more than one Britannica article and sent it to reviewers. This was deliberate and was clearly acknowledged in material published alongside our original story. In a small number of cases, Britannica's search engine returned two links to substantive amounts of material on the subject we wanted to review. In these cases, we sent reviewers the relevant information from both links. This could, of course, make the combined article sound disjointed. But we asked reviewers to look only for errors, not issues of style. When reviewers commented on style problems, we ignored those comments. More importantly, we feel that if the review identified an error, it is irrelevant whether that error came from a single article or the combination of two entries."

When we combined Britannica articles, the point was to more fairly represent what it offered. Let's imagine you search on "plastic" and get two Britannica articles: "plastic" and "uses of plastic". But Wikipedia covers both aspects of this topic in a single entry. In that case, it is fairer to combine the two Britannica entries when making a comparison with Wikipedia. When we combined the entries we were careful to provide what we thought was a fair representation of what Britannica had to offer on a topic. This actually reduced the chance of generating errors of omission, rather than increasing it.

Britannica’s executive editor, Ted Pappas.

“The premise is that Wikipedia and Britannica can be compared. Wikipedia has shown that if you let people contribute they will. But all too often they contribute their bias, opinions and outright falsehoods. Too often Wikipedia is a forum that launches into character defamation, these are the sorts of fundamental publishing vices that no vetted publication would fall prey to which is why it is so absurd to lump gross offenses in publishing to typos in Britannica articles. They are fundamentally different.”

“Comparing articles from children’s products to Wikipedia, and taking Yearbook articles, something written about a 12-month period, to a Wikipedia article is absurd. There is also this ludicrous attempt to justify this by saying that Nature was doing a comparison of websites. Nothing in their study intimates it was a study of websites.”

“The idea of excerpting a 350-word intro to a 6,000 word article [on lipids] and sending only this is absurd. And to allow reviewers to criticise us for not finding these omissions in an article because they have only been given a 350-word introduction is a major flaw in the methodology. Who chooses the excerpts? Nature’s editors had no right to change these, and pass these off as Britannica articles. Anyone who had to write 350 words on lipids would approach this very differently to someone having to write 6,000”.

“We’ll never know if they were biased from the beginning. Everything from day one, their refusal to give us the data through the heights of criticism, to the pro-Wikipedia material that accompanied the release, gives us reason to suspect there was bias from the beginning.”

“I’m sure there will be a continuing discussion about nature and the future of knowledge and how it should be codified and consumed. There is room in this world for many sources of information, and we are confident about our place in that world and know we are not the only source, and never have been”.

Monday, March 20, 2006

The cutting edge

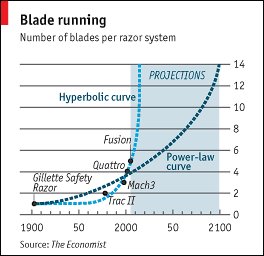

As most people will have noticed, razors seem to be acquiring ever greater numbers of blades these days. The razor manufacturers, in particular Gillette, seem to like adding new blades to their razors. The latest arrival is the Gillette Fusion, which has a whopping 5 blades (6 if you count the single blade on the back of the Fusion, for 'tricky' areas).

This is, of course, largely about marketing. There isn't any real consumer demand for more blades on a product, any more than there is a demand for antibacterial plastic or tongue scrapers on toothbrushes.

Nevertheless, the rate of introduction of new blades (plotted above) does make one wonder whether there is a link with the rate at which materials technology progresses. More blades means lighter and stronger materials have to be used. So do we have a Moore's Law for razor blades?

Various bits of analysis on this curve suggest we'll go into blade hyperdrive anytime in the next few years or so. More conservatively, by 2100, the 14-bladed razor will have arrived--whether we want it or not. Anyone care to bet that five isn't the end of the road? With Gillette's latest arrival it has trademarked the term 'Shaving surface'. Nano blades anyone?

The cutting edge

Mar 16th 2006

A Moore's law for razor blades? (more...)

The Economist

For a hilarious take on this story, written long before Gillette announced its five-bladed razor, see The Onion, F*ck everything we're doing five blades (more...)

Tuesday, March 14, 2006

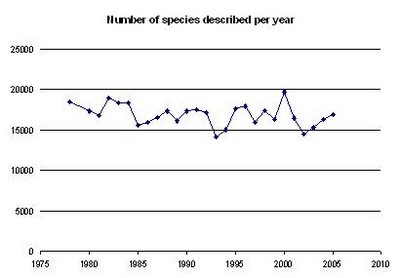

Something wrong with taxonomy

In many areas of life we are used to the idea that productivity tends to increase, as new technologies make things more efficient. So this is one of the things that makes the graph to the left quite surprising. It shows the number of new species described every year, and was based on data compiled by the journal Zoological Record*. Zoological Record scours the published literature and makes an index of all new names they find--the journal estimates it collects about 95% of what is published.

Part of the explanation is, no doubt, that in the last decade there have been large cuts to taxonomy funding, although this hasn't occurred everywhere in the world. Plus, the number of species described in 2005 really isn't that different from the late 70s, an era that many would agree was much better for taxonomy support.

So what this graph suggests is that taxonomy remains an area that really isn't making any productivity gains of the kind that one would expect in modern science. While DNA is sequenced and stitched together at ever-faster rates, and data analysed, graphed and published at rates inconceivable 15 years ago, taxonomists appear to be doing things at the same rates they always have. Time for change? Some would suggest a renaissance is needed, and that is why a group of scientists met at London Zoo on 1st March to discuss the modernisation of taxonomy.

*The data were kindly supplied by Nigel Robinson of Zoological Record.

Monday, March 13, 2006

Vesuvian footprints

Two recent items published in The Economist:

A bronze-age burial

Mar 9th 2006

Vesuvius is more destructive than previously thought

THERE was barely any warning. To the citizens of Pompeii, the eruption of Vesuvius was a deadly surprise. Earlier in the month the town's wells had dried up, but then one afternoon a huge eruption blotted out the sky and buried the city. For archaeologists, such great human misfortune has been useful; today, Pompeii offers a remarkable snapshot of 1st-century Roman life. But it would be a mistake to think that what happened to it is typical of a Vesuvian eruption. The discovery, a few years ago, of several dozen entombed Bronze Age settlements, about 15km north-north-west of the volcano, is today showing that Vesuvius is able to devastate a far wider region than succumbed in 79AD. (more... subscription required)

The Avellino 3780-yr-B.P. catastrophe as a worst-case scenario for a future eruption at Vesuvius by Giuseppe Mastrolorenzo, Pierpaolo Petrone, Lucia Pappalardo, and Michael F. Sheridan. Proceedings of the National Academy of Sciences. To be published in the online early edition in the week March 6-10, 2006.

Dr Michael Sheridan's research pages.

Gold fingered

Mar 2nd 2006

An unexpected discovery may help explain how old arthritis drugs work

JOURNALISTS wishing to hype a medical discovery often reach for the cliché “silver bullet”. Well, here is a story where the bullets are made of gold and platinum, as well.

Those bullets' targets are a range of ailments known as autoimmune diseases. These diseases, which include juvenile diabetes, lupus and rheumatoid arthritis, are the result of the body's immune system turning on its host. Instead of recognising and attacking foreign objects, it recognises and attacks its owner's own cells. Such diseases are hard to treat and there is, as a consequence, a need to find new drugs that will suppress the parts of the immune system that generate this unwanted response—and, as he writes in Nature Chemical Biology, Brian DeDecker, a cell biologist at Harvard Medical School, thinks he may have a clue to the answer. (more...)

Thursday, February 23, 2006

The magical mining microwave

Just published in The Guardian's weekly technology section.

Wave goodbye to the daily grind

Microwaving rocks to release the minerals inside could save the mining industry millions and halve its use of electricity

Natasha Loder

Thursday February 23, 2006, The Guardian

Sitting innocuously on a bench in a laboratory in Chelmsford is what has been advertised as the "world's most powerful microwave". It's a slightly grubby white plastic oven that was, apparently, bought at Currys by researchers at the technology company e2v. In anticipation, I have brought a bag of potatoes. Trevor Cross, e2v's technical director, reckons his souped-up beauty can cook a baked tatty in 0.02 seconds, although he warns that it might not really resemble a potato when it is done. It might be vapourised. (more...)

Wednesday, February 22, 2006

The aves, and ave nots

Update 24th February:

The aves, and ave nots

Feb 23rd 2006

Avian influenza is spreading to many new countries. But migrating wild birds may not be the only culprits

IN AROUND a month, bird flu has appeared in a seemingly alarming number of new countries. The disease is already endemic in the poultry flocks of much of Asia. In the face of the relentless march of the H5N1 virus around the world, fatalism is not an appropriate response. Better to look at exactly what is going on. (more...)

Editorial: Ominous

Feb 23rd 2006

Bird flu spreads around the globe

FOR most of the past three years, the highly pathogenic bird flu known as H5N1 has been found mainly in Asia. Suddenly it has arrived in many countries in Europe, triggering widespread alarm. The detection of the virus in wild birds across Europe is certainly a cause for concern, particularly to Europe's poultry farmers, who are rightfully worried that the presence of the virus in wild birds will increase the risk to their flocks. However, in the midst of a European debate about the benefits of vaccinating chickens and whether or not poultry should be brought indoors, there is a danger that far more significant events elsewhere will be overlooked. (more... subscription required)

Monday, February 20, 2006

Ecology goes open source

Last week I spent some time in Brazil, near the astonishing Iguacu Falls--easily one of the great natural wonders of the world and far more impressive than Niagara.

A group of scientists known as TEAM, which stands for the Tropical Ecology, Assessment & Monitoring Initiative, met near the town of Foz do Iguaçu, from February 10-14, 2006, to discuss biodiversity monitoring. The group has a sizeable chunk of money from the foundation backed by Intel founder Gordon Moore, and TEAM's job is to find long-term ways of monitoring biodiversity across the planet.

It's harder than it sounds. Ecologists tend to work on their own, in their own particular ways and on their own favourite sites. Now, to accomplish a planetary-scale task, they have to become more "open source", by agreeing on common protocols for studying everything from vegetation to mammals, and (gasp!) sharing data. It seems that a lot of progress was made at the meeting. Even slightly cynical scientists seemed to think that TEAM had found a good recipe for success, even though the meeting, according to one, had had "all the hallmarks of disaster" before it started.

One of the stars at the meeting was a gentleman called Scott Brandes, who works for TEAM, and who has come up with a portable and easy way of doing acoustic monitoring--that is, listening to and identifying insects by the sound that they make. It has so thrilled some of the other scientists there that the technique looks likely to be taken up by the primatologists--who want to use it for monitoring nocturnal mammals. To hear more about this, listen to a report for Science in Action on the BBC's World Service this Friday (and repeats), or via the listen-again link on the programme's web page.

Saturday, February 18, 2006

Animal names

Editorial

Names for sale

Feb 9th 2006

The ancient science of taxonomy might benefit from a little modern marketing

CALLICEBUS AUREIPALATII is a Bolivian monkey whose biggest claim to fame is that its name came by way of an internet auction. It was purchased last year by a Canadian online casino for $650,000; and thus the Golden Palace monkey came into the scientific literature and Bolivian conservationists hit the jackpot.

If that all sounds a bit infra dig, the facts of the matter are that millions of animals are in need of names and that taxonomists require all the inspiration they can get. Frequently they name their discoveries after each other, after members of their families and (at least in the days when private patronage financed collecting expeditions) after the rich dilettantes who paid the bills. But that leaves plenty of critters without a moniker, so the net has been cast wider. (more...subscription required).

Taxonomy

Today we have naming of parts

Feb 9th 2006

A global registry of animal species could shake up taxonomy

AT THE moment, the department of entomology at London's Natural History Museum is being rearranged, by bulldozer. It isn't a bad emblem for the broader changes transforming the science of taxonomy. Walls and ceilings are being torn down, and the tatty furniture has been thrown out. Change was due, because nearly 250 years after Carl von Linné, a Swedish naturalist, invented the modern system of naming living creatures, taxonomists still have no official list of all the animals discovered so far. This makes the work of biologists, ecologists and conservationists—who rely on species names to know just what it is they are studying and conserving—more difficult than it need be. (more...)

Wednesday, February 08, 2006

What's in a name?

This Friday, we'll be publishing an article and an editorial on taxonomy. It's about the proposal to create an official register of animal names--known as Zoobank. Already a few little gems from the article are strewn on the cutting room floor. One of them is an interview with Doug Yanega, an entomologist and museum curator at the University of California, who believes taxonomy has become too cumbersome. He told me, “The man who got me started in my career worked for 30 years on a bee subgenus that was so large and unwieldy that he died before publishing a single revisionary or descriptive work.” An official master list that was published by taxonomists would make the process of describing new species and finding supporting material easier, he adds, and make taxonomy more exciting and easier to pursue. Furthermore, anyone with an interest in a particular group of animals would know exactly who works on it and what the latest information is.

Dr Yanega also had some fascinating comments on the problems he faces, and how some kind of official list of animal names might help:

"As it stands presently, all taxonomists have a fundamental problem in simply keeping track of all the literature, old taxon names, and other miscellanea associated with their group of interest. It's not that it's completely unmanageable, but it does have two real impacts: (1) it slows things down and compels one to "scale down" [taxonomic projects can't get too ambitious]... and (2) it creates a barrier to anyone attempting to "break into" a taxonomic group for which there is no surviving expert who has done all the legwork...both problems will be greatly reduced by having a Registry - coming out of the spin-offs that such a Registry will facilitate, such as a digital library of original descriptions and revisionary works, and a database linking taxon names to institutional holdings of those taxa, and digital libraries of images of type specimens.

A single authoritative list is the requisite foundation for any of these more ambitious undertakings - the reality of this conclusion can be seen easily enough, by looking at which taxa in the world already *have* some of these resources developed: they are all cases in which the underlying list of taxa has already been worked out and made public - small, well-defined groups that have a relatively high proportion of taxonomists to taxa (like fish, or birds, etc.).

As an entomological taxonomist, whose general sphere of activity encompasses over 90% of the known taxasphere, and who - even when narrowly focused - can find himself dealing with a single genus that contains more species than the entire Mammalia, the prospect of ever having such a set of resources that I can use is still daunting, though, and cannot even be dreamt of until and unless we have a master list of all their names. For people who work on fish, or birds, or dinosaurs, a Registry might be a fairly trivial addition to the tools at their disposal, but for the rest of us working on all the *other* life forms, it's anything but trivial.

Even worse, I'm not just an entomologist, but a *museum curator*, which means that I can, potentially, have to deal with ANY of the over 1 million named insects, and have to track down any of the over 6 million published names applied to those species. If I had a master list, I would be able to simply check off our holdings against that master list, and could thus fairly readily inform the world at large what, precisely, my collection contains. At present, were I to simply compile a list of taxon names as they appear in our collection at present, at least a quarter would probably be incorrect, and I have no easy way of making the necessary corrections. Extend that to every other museum curator in the world, and we're not talking just simple bookkeeping, but a major tool for networking and data sharing."

Friday, January 27, 2006

A sale of moon dust

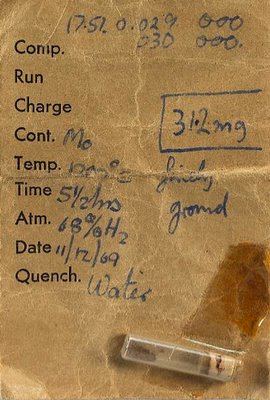

Lot 195, on sale at London auctioneer Bonhams earlier this week: Apollo 11 moon dust. Now you don't see that every day. When I called Bonhams to ask where it came from, the press officer said, "the moon".

Well, it actually came from a laboratory technician, and discussions are underway between Bonhams, NASA, and the vendor to figure out who really owns it. My bet is that it belongs to the U.S. of A.

In the picture on the left, the dust sample is on the right hand side of the tube. On the other end of the tube is a cork. The sample appears to be sellotaped to the laboratory sample sheet.

Read the full article in this week's Economist:

A fall of moon dust

Jan 26th 2006

A sample collected by Apollo 11 almost goes on sale

“LOT 195 has apparently gone back to the moon. It has been withdrawn from sale.” Thus spake Jon Baddeley, head of scientific instruments at Bonhams auctioneers in London on January 24th. (more...)

Saturday, January 21, 2006

Omega-3s are good for pregnant women

This week I wrote a story about how pregnant women may be able to make their children happier, healthier and smarter by eating more fish. This was picked up quite widely in the media.

The research was reported by an American NIH researcher, Joseph Hibbeln, working with a British scientist, Jean Golding. The results were important because pregnant women have been warned off fish by two federal agencies in the US. This advice has since been taken up in many other countries around the world. This research suggests that this is wrong. Yes, fish has trace amounts of mercury, and mercury is bad for an unborn child, but omega-3s are so essential that avoiding fish is detrimental to the child’s health.

For the worried, it is possible to buy omega-3 supplements where mercury has been removed. But supplements from a vegetable source such as flax oil will only contain one type of omega-3 and I understand that pregnant women want to be consuming all three. So fish oil, or marine algae extract with the three types of omega-3, are the thing to consume.

This research comes hot on the heels of much research that says omega-3s are vital to adult health and can affect depression, cardiovascular disease and a whole bunch of other things. Perhaps the time has come for governments to consider setting an RDA for this nutrient? As our knowledge of nutrients grows, so does the list of things that we recognise to be essential. These days folic acid is added to our cornflakes because it is seen as essential, the same may, one day, happen with omega-3s.

Anyone worried about overfishing who wants to eat fresh fish should buy small fish that reproduce rapidly and are less likely to be overfished: sardines, herring, mackerel and skipjack tuna. Waitrose in the UK sells a wide range of fish that is certified from sustainable fisheries, and there is also the American

There is one final, interesting twist to this tale. Another essential nutrient, omega-6s, taken in abundance, can strip the body of omega-3s. The main omega-6 molecule is linoleic acid, and comes from seed oils such as sunflower, soy and corn (or maize) oil. So another strategy for boosting omega-3s is to eat fewer omega-6s--of which there are rather a lot in the western diet. Fewer chips or fries. Fewer fryups unless it's in olive oil, or... lard!

It turns out that there is a strong relationship between the amount of linoleic acid consumed in a diet and homicide rates, both within and between countries. One idea about omega-3s that might help explain this relationship, is that omega-3s are essential for the correct wiring of the brain, and help us to contain our more violent impulses. I mean you never see a fish-loving Japanese guy dressing up in bling and engaging in a bit of a gang shootout.

I digress. So the arrival of linoleic acid in our diet is possibly the biggest single change in the human diet since the year dot. Americans get a whopping 10% of their calories from this single molecule.

The omega point

Jan 19th 2006

From The Economist print edition

Omega-3 fatty acids are a crucial component of a healthy diet—particularly, it seems, for pregnant women wanting bright, sociable children. (more...)

Food for thought

Jan 19th 2006

From The Economist print edition

In praise of omega-3s (more... sub req)

Thursday, January 12, 2006

Astronomers in massive planet cover up!

Next week, the New Horizons mission to Pluto is due to take off. On its journey to the ninth planet one would imagine that the folks at NASA will find out lots about the tens of thousands of icy objects way out in the Kuiper belt, including that Pluto is just one of many similar-sized objects out there. Along with the recently discovered UB313, might there be hundreds of objects discovered that are the size of Pluto?

All this raises the question, once again, of what exactly is a planet. Last year, the discovery of UB313 caused astronomers to rush off to decide, once and for all, what the difference is between a planet and a not-planet. This was reported in Nature in August last year (see here). They might, says the report, decide in a week. Five months later, I decided to ring up the astronomers for a progress report.

The organisation responsible for deciding what a planet is is the International Astronomical Union, and all the head-honcho astronomers are to be found in orbit around it. One of its vice presidents explained, sorrowfully, that it all turned out to be a bit problematic.

So I called the man who headed the committee. (Remember, these are the guys at college who did double maths and physics because it was easy.) So it came to pass that I chatted with Iwan Williams, a charming astronomer at Queen Mary College. The board, he said, produced some recommendations, but couldn’t decide what the definition of a planet was.

It turned out to be a difficult problem, he said. How hard could it be? I asked in amazement. Well, he said, the only thing the astronomers could agree on was that there is a difference between things that are able to hold themselves together and be spherical under the weight of their own gravity and other lumpy objects such as an asteroid and the nucleus of a comet. I wasn’t impressed with progress on the planet/not-planet issue. OK, I said, so what’s the problem defining a planet as something where gravity makes the object spherical? The problem, he replied, is that if you use this definition you get too many planets.

“Too many planets!” I exclaimed, “how many is too many?”

“Well, about 30” he said sheepishly.

“But who are you to say what the right number of planets is in the solar system?”

It turns out that astronomers quite like there being a small number of planets; it makes them special. Planetary astronomers particularly, one presumes, have a sense of superiority over their co-workers who study mere asteroids and minor moons. And it might upset the public, as well, which has gotten used to this nice nine-planet solar system. The Earth seems so much more special as one of nine. But one of 30?

So later this year, at a big IAU punch-up, I mean conference, in Prague, they'll have to decide. Sounds like somehow they'll make sure that we all get the "correct" number of planets that we've come to expect rather than anything that would disturb today's vision of the universe. So much for paradigm shifts and scientific revolutions. Crikey, Copernicus will be turning in his grave.

Latest: one reader writes to suggest Pluto be renamed: "the object formerly known as Pluto"

Postcards from the edge

Jan 12th 2006

From The Economist print edition

A greater understanding of the Kuiper belt will fuel uncertainty over what, exactly, a planet is

ONCE upon a time, people thought that the solar system consisted of four small, rocky inner planets, four large, gassy outer planets and an odd little runt called Pluto. Since the early 1990s, though, almost a thousand other runts have been discovered in the region of the solar system called the Kuiper belt, where Pluto resides. Most of these objects are a lot smaller than Pluto, but a few are of similar size and one called 2003 UB313 is larger. (More... no sub req)